When I read about science in the mainstream press, I’m always very skeptical of the content. It’s not that I don’t trust journalists, but in my experience, scientists’ words are usually hyped to levels that were definitely not there when the journalist discussed the research with them or read it. And headlines like the one I’m going to discuss below make me even more skeptical:

“World’s smallest LED could turn your phone camera into a high-res microscope.” That is the headline of a New Atlas article, and of course I saw it shared in my social circles and it caught my attention. I love microscopy and read a lot about it to keep up with new technology for my work; it’s a part of my job that I really enjoy. So I read the article to find out what this new LED technology, the one that will supposedly turn our iPhones into live-imaging tools, was all about.

I’ll focus on this excerpt from the article:

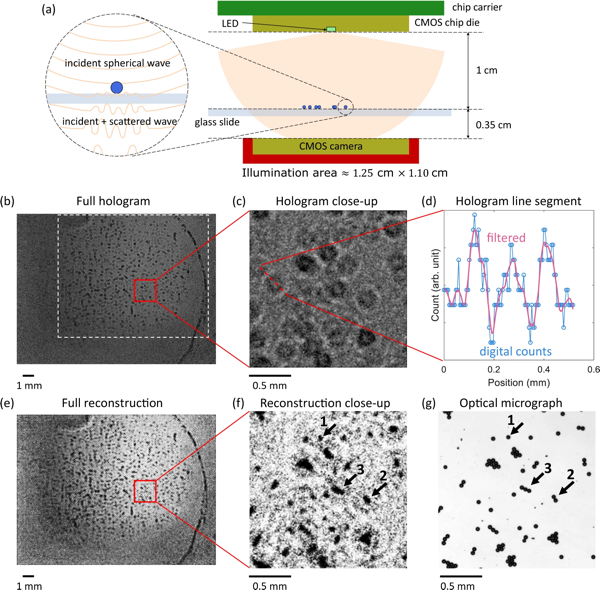

> To test how their LED might be used in a real-world situation, they placed it into a lensless holographic microscope. Lensless microscopes are smaller than regular microscopes and less expensive because they don’t require complex, precise lens systems. They use a light source to illuminate a sample; the light is then scattered onto a CMOS digital image sensor, creating a digital hologram that a computer processes to produce an image.
>
> There can be difficulties with lensless holographic microscopy in reconstructing an image. Usually, an accurate reconstruction requires detailed knowledge of the aperture and wavelength of the source light and sample-to-sensor distance. To counter this difficulty, the researchers used a neural networking algorithm to reconstruct objects viewed by the holographic microscope. Neural networks are computer systems that mimic the networks of the human brain, relying on training data to learn and improve their accuracy over time.

> The researchers found that their holographic lens provided more accurate high-resolution images than a regular optical microscope. They calculated that its resolution was approximately 20 micrometers (microns). For context, a human skin cell is 20 to 40 microns across; a white blood cell is about 30 microns.
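
To make the excerpt’s point about reconstruction a bit more concrete (that a physics-based reconstruction needs the wavelength and the sample-to-sensor distance), here is a minimal Python/NumPy sketch of the angular spectrum method, a standard textbook way to numerically propagate a hologram back to the sample plane. It is not the authors’ method: they replaced this step with a neural network. The helper names `angular_spectrum_propagate` and `naive_inline_reconstruction`, the wavelength, pixel pitch, distance, and the opaque 20 µm disk standing in for a latex microsphere are all hypothetical choices for illustration, not values taken from the paper.

```python
import numpy as np


def angular_spectrum_propagate(field, wavelength, dx, z):
    """Propagate a sampled complex optical field over a distance z.

    Hypothetical helper. All lengths are in metres. `field` is a 2-D complex
    array on a square grid with pixel pitch `dx`. A negative `z` back-propagates,
    e.g. from the sensor plane to the sample plane.
    """
    ny, nx = field.shape
    k = 2 * np.pi / wavelength
    fx = np.fft.fftfreq(nx, d=dx)  # spatial frequencies in cycles per metre
    fy = np.fft.fftfreq(ny, d=dx)
    FX, FY = np.meshgrid(fx, fy)   # both arrays have shape (ny, nx)
    arg = 1.0 - (wavelength * FX) ** 2 - (wavelength * FY) ** 2
    propagating = arg > 0          # evanescent components are discarded
    kz = k * np.sqrt(np.where(propagating, arg, 0.0))
    transfer = np.where(propagating, np.exp(1j * kz * z), 0.0)
    return np.fft.ifft2(np.fft.fft2(field) * transfer)


def naive_inline_reconstruction(intensity, wavelength, dx, z):
    """Back-propagate a recorded in-line hologram to the sample plane.

    Hypothetical helper. A sensor records only intensity, so the phase is
    simply assumed to be zero. This textbook starting point leaves a defocused
    "twin image" artifact superimposed on the reconstruction.
    """
    amplitude = np.sqrt(np.clip(intensity, 0.0, None)).astype(complex)
    return angular_spectrum_propagate(amplitude, wavelength, dx, -z)


# Illustrative (hypothetical) parameters, not taken from the paper.
wavelength = 1.1e-6  # near-infrared wavelength, m
dx = 1.0e-6          # sensor pixel pitch, m
z = 500e-6           # sample-to-sensor distance, m
n = 256              # grid size in pixels

# Object: an opaque disk 20 um across on a clear background.
coords = (np.arange(n) - n // 2) * dx
X, Y = np.meshgrid(coords, coords)
obj = np.where(X**2 + Y**2 <= (10e-6) ** 2, 0.0, 1.0).astype(complex)

sensor_field = angular_spectrum_propagate(obj, wavelength, dx, z)
hologram = np.abs(sensor_field) ** 2  # what the CMOS sensor actually measures

# Intensity-only reconstruction (phase lost), as a real sensor would allow.
naive = naive_inline_reconstruction(hologram, wavelength, dx, z)
# Idealised reconstructions that cheat by keeping the full complex field.
exact = angular_spectrum_propagate(sensor_field, wavelength, dx, -z)
wrong_z = angular_spectrum_propagate(sensor_field, wavelength, dx, -0.8 * z)
```

In this toy setup, back-propagating the full complex field by the correct distance recovers the disk up to floating-point error, while a 20% error in `z` leaves a blurred field that no longer matches the object. A real CMOS sensor only records `hologram` (intensity), so the phase is lost and `naive` carries the twin-image artifact: exactly the kind of gap that extra computation, whether iterative phase retrieval or a trained neural network, is brought in to fill.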

The article then concludes:

> The researchers see many applications for their next-gen CMOS-integrated micro-LED and neural network, including reconstructing microscopic objects such as human tissue samples and plant seeds. And the researchers say it can be used in existing smartphone cameras simply by modifying the phone’s silicone chip and software, converting the phone into a high-resolution microscope.

OK, so they built a tiny LED and used it to illuminate a sample of microscopic latex spheres in order to estimate the potential resolution of the system. Their microscope is roughly 1 cm wide (for Americans, that’s about 0.000109 football fields). And the article’s conclusion is either the scientists overselling their technology to advertise their research, or the journalist taking their words and twisting them into “This will let your iPhone take pictures of your blood cells!” The original research was published in Nature Communications, so I read the paper to get the story first-hand from the authors.

The image above, from the linked article, shows a schematic of the holographic microscope they set up and the resulting image, acquired with it and reconstructed using computational imaging. The result does show that they can acquire images of structures smaller than 20 μm, which is definitely at the cellular level. Still, the images are of relatively poor quality, and this is not a real microscope. They are also resolving small latex microspheres, not cellular components, and those are far easier to reconstruct than complex biological structures.

I won’t go into the physics of light and optics, because it involves a lot of technical jargon that is well beyond the scope of this blog post, but there’s a rule of thumb in microscopy: anything that tries to defy the limits of light, or that uses a non-optimal setup for image quantification, has to rely on the computer to help reconstruct the images. And computational reconstruction tends to introduce artifacts. We make advances in computational imaging almost daily, and the results are impressive, but any new technology that promises so much always makes me skeptical, because it takes years (if not decades) to develop it to a level that is usable in a real environment.

There are techniques that combine optics, physics, and computation to break the size barrier that light sets (the diffraction limit). This is called super-resolution microscopy, and it’s a fascinating topic that I’ll cover someday. But in this paper, they’re not doing super-resolution, and they’re not breaking the resolution limits of light either. They’re trying to miniaturize a microscope so that it could fit into a smartphone-sized device and acquire images without any lenses. They have successfully created a light source that can deliver resolution at the cellular level, comparable to a full-sized light microscope. But their work is still (mostly) theoretical: no complex biological structure was imaged.

This is, of course, a first step toward a new generation of small microscopes, and as a first step it is very impressive. But saying that it will turn our phone cameras into microscopes is overselling it.

In the Discussion section of the paper, the authors tone their findings down to a much more realistic level:

> More advanced computational techniques can be used to improve the reconstruction. Besides the demonstrated application in holography, the presented LED is potentially useful in multiple other scenarios. For example, since the wavelength is within the minimum absorption window of biological tissues [49], together with its high intensity and nanoscale emission area, the LED can be ideal for bio-imaging and bio-sensing applications, including near-field microscopy and implantable CMOS devices. Also, it is possible to integrate the LED with on-chip photodetectors and the LED can then find its applications in on-chip communication, NIR proximity sensing, and on-wafer testing of photonics.

I would still argue that the technology, while interesting, is far from any real application in biology. Also, I tend to run away from any technology that promises, through “computational techniques”, images comparable to those of a fully optical approach. Microscopes are able to extract a lot of information from samples through their high-quality objectives and illumination systems. Computational imaging can do wonders, but its applications are different, and the two can’t really be compared. I’m not saying computational photography is bad. Heck, look at the pictures our phones take nowadays: you have to admit that it is very impressive that such small lenses get so close to what a full-fledged digital camera with an expensive lens can do. And I’m as confident as the authors that their advances will help scale down microscopes and find new applications in the future, but we’re still very far from getting the same results with a 1 cm microscope as with a big optical microscope that takes up a whole desk and costs as much as a graduate researcher’s yearly salary.

I guess what I’m trying to emphasize here is that science is slow, advances always come later than we want them to, and we should not hype results, because we risk people losing faith in our work. In this era of misinformation, we should run away from eye-catching headlines and be more realistic, even if that means our articles won’t go viral.

References

- New Atlas. World’s smallest LED could turn your phone camera into a high-res microscope.

- Li, Z., Xue, J., de Cea, M. et al. A sub-wavelength Si LED integrated in a CMOS platform. Nat Commun 14, 882 (2023). doi.org/10.1038/s…

- Merenich, D., Van Manen-Brush, K. E., Janetopoulos, C., Myers, K. A. Chapter 18 - Advanced microscopy techniques for the visualization and analysis of cell behaviors. In: Cell Movement in Health and Disease. Academic Press, 2022, pp. 303-321. ISBN 9780323901956. doi.org/10.1016/B…